
Note

The Notebooks (preview) feature was removed on April 13, 2020. The removal of the Notebooks tab and user notebook files is currently rolling out to Azure regions worldwide.

Python is a valuable tool in the tool chest of many data scientists. It's used in every stage of typical machine learning workflows including data exploration, feature extraction, model training and validation, and deployment.

This article describes how to use the Execute Python Script module to run Python code in your Azure Machine Learning Studio (classic) experiments and web services.

Using the Execute Python Script module

The primary interface to Python in Studio (classic) is through the Execute Python Script module. It accepts up to three inputs and produces up to two outputs, similar to the Execute R Script module. Python code is entered into the parameter box through a specially named entry-point function called azureml_main.

Input parameters

Inputs to the Python module are exposed as Pandas DataFrames. The azureml_main function accepts up to two optional Pandas DataFrames as parameters.

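The mapping between input ports and function parameters is positional: the first connected input port maps to the first parameter of the function, the second input port (if connected) maps to the second parameter, and the third input port is used to import additional Python modules.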

More detailed semantics of how the input ports get mapped to parameters of the azureml_main function are shown below.

Output return values

The azureml_main function must return a single Pandas DataFrame packaged in a Python sequence such as a tuple, list, or NumPy array. The first element of this sequence is returned to the first output port of the module. The second output port of the module is used for visualizations and does not require a return value. This scheme is shown below.

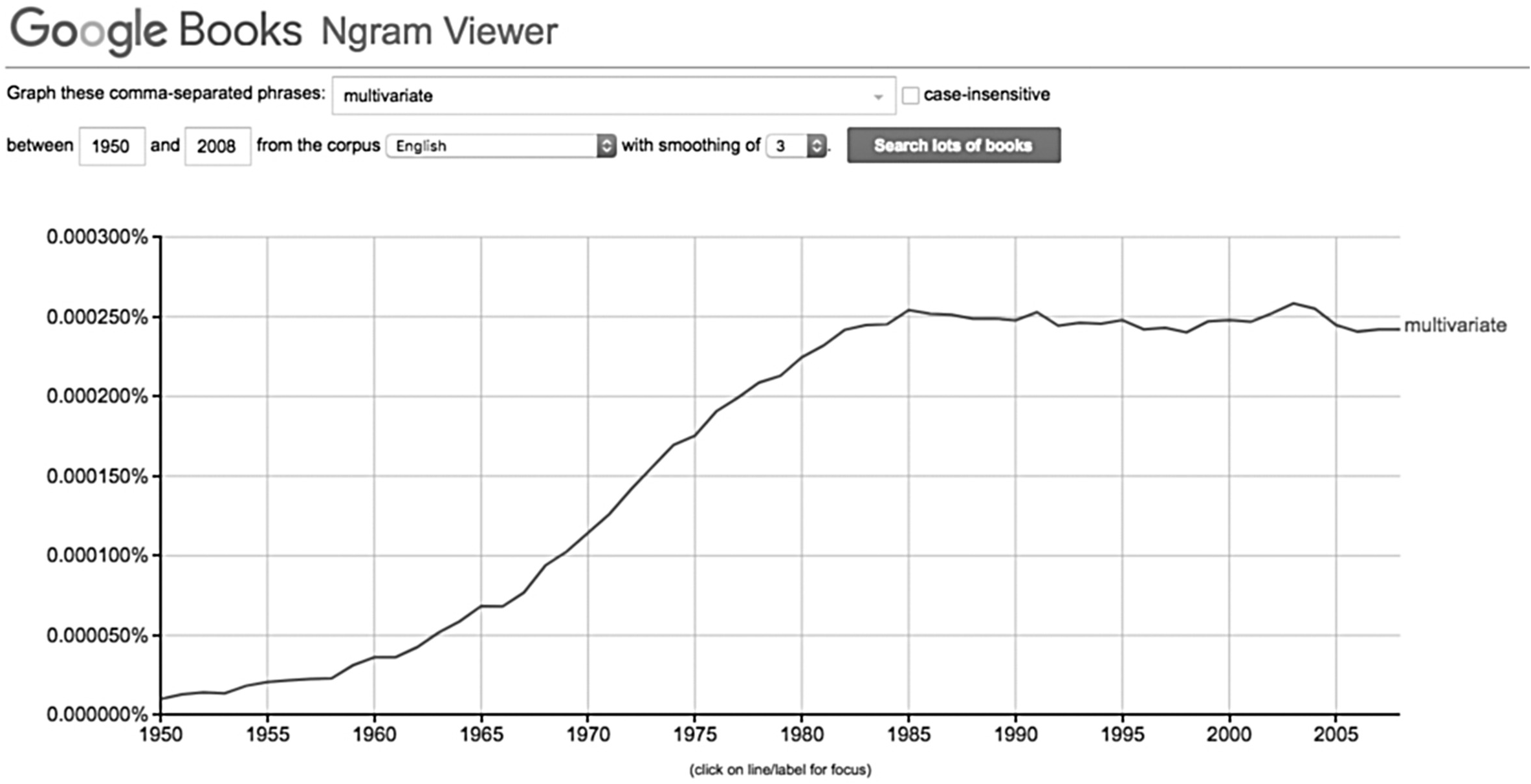
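The input and output conventions described above can be sketched as follows. This is a minimal illustration under stated assumptions, not code taken from the module: the parameter names dataframe1 and dataframe2, the None defaults for unconnected ports, and the sample data are choices made for this example. Only the entry-point name azureml_main and the DataFrame-in-a-sequence return shape come from the text.

```python
import pandas as pd


def azureml_main(dataframe1=None, dataframe2=None):
    """Entry point for the module (parameter names are illustrative).

    dataframe1 stands for the first input port and dataframe2 for the
    second. The None defaults are an assumption of this sketch so the
    function still runs when a port is not connected.
    """
    if dataframe1 is None:
        # Nothing on the first port: return an empty frame.
        return (pd.DataFrame(),)

    result = dataframe1.copy()
    if dataframe2 is not None:
        # Example use of the second input: append its rows to the first.
        result = pd.concat([result, dataframe2], ignore_index=True)

    # A single DataFrame wrapped in a sequence (here a one-element tuple).
    return (result,)


if __name__ == "__main__":
    # Local stand-ins for two connected input ports (made-up data).
    first = pd.DataFrame({"x": [1, 2]})
    second = pd.DataFrame({"x": [3]})
    (out,) = azureml_main(first, second)
    print(out)
```

Returning a tuple rather than a bare DataFrame keeps the function in line with the requirement that the result be packaged in a sequence.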

Translation of input and output data types

Studio datasets are not the same as Pandas DataFrames. As a result, input datasets in Studio (classic) are converted to Pandas DataFrames, and output DataFrames are converted back to Studio (classic) datasets. During this conversion process, the following translations are also performed:

* All input data frames in the Python function always have a 64-bit numerical index from 0 to the number of rows minus 1.
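When testing a script outside Studio (classic), the index behavior described above can be mimicked with a plain index reset. This is a sketch of a local stand-in, not the conversion code Studio itself runs; the helper name mimic_studio_index and the sample data are hypothetical.

```python
import pandas as pd


def mimic_studio_index(df):
    """Return a copy of df with a fresh 0..n-1 integer index (hypothetical helper)."""
    return df.reset_index(drop=True)


if __name__ == "__main__":
    # A frame with a custom string index, as local test data often has.
    local = pd.DataFrame({"value": [10, 20, 30]}, index=["a", "b", "c"])
    as_seen_by_script = mimic_studio_index(local)
    print(list(as_seen_by_script.index))  # prints [0, 1, 2]
```

Code that relies on a custom index locally would behave differently inside the module, so resetting the index before testing is one way to catch that early.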

Importing existing Python script modules

The backend used to execute Python is based on Anaconda, a widely used scientific Python distribution. It comes with close to 200 of the most common Python packages.

Next, we create a file Hello.zip that contains Hello.py:

Upload the zip file as a dataset into Studio (classic). Then create and run an experiment that uses the Python code in the Hello.zip file by attaching it to the third input port of the Execute Python Script module as shown in the following image.

The module output shows that the zip file has been unpacked and that the function print_hello has been run.
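The packaging and import steps above can be emulated locally with the standard library. This is a rough sketch, assuming that Hello.py defines a print_hello function with the body shown here and that unpacking the archive and adding the directory to sys.path approximates what the module does. The helper names and the function body are assumptions; only the file names and the name print_hello come from the text.

```python
import importlib
import os
import sys
import tempfile
import zipfile

# Assumed contents of Hello.py; only the function name print_hello
# comes from the article.
HELLO_SOURCE = '''def print_hello(name):
    print("Hello " + name)
'''


def build_hello_zip(work_dir):
    """Write Hello.py into work_dir and package it as Hello.zip."""
    script_path = os.path.join(work_dir, "Hello.py")
    with open(script_path, "w") as handle:
        handle.write(HELLO_SOURCE)
    zip_path = os.path.join(work_dir, "Hello.zip")
    with zipfile.ZipFile(zip_path, "w") as archive:
        archive.write(script_path, arcname="Hello.py")
    return zip_path


def import_from_zip(zip_path, extract_dir):
    """Unpack the archive and import Hello, roughly emulating the module."""
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(extract_dir)
    if extract_dir not in sys.path:
        sys.path.insert(0, extract_dir)
    importlib.invalidate_caches()
    return importlib.import_module("Hello")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as work_dir:
        zip_path = build_hello_zip(work_dir)
        extract_dir = os.path.join(work_dir, "unpacked")
        hello = import_from_zip(zip_path, extract_dir)
        hello.print_hello("World")  # prints "Hello World"
```

Inside the module, the equivalent of import_from_zip is handled by the runtime, so the script itself would only need the import statement and the call.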

Accessing Azure Storage Blobs

You can access data stored in an Azure Blob Storage account using these steps:

Operationalizing Python scripts

Any Execute Python Script modules used in a scoring experiment are called when published as a web service. For example, the image below shows a scoring experiment that contains the code to evaluate a single Python expression.

A web service created from this experiment would take the following actions:

Working with visualizations

Plots created using Matplotlib can be returned by the Execute Python Script module. However, plots aren't automatically redirected to images as they are when using R, so you must explicitly save any plots to PNG files.

To generate images from Matplotlib, you must take the following steps:

This process is illustrated in the following images that create a scatter plot matrix using the scatter_matrix function in Pandas.

It's possible to return multiple figures by saving them into different images. The Studio (classic) runtime picks up all images and concatenates them for visualization.
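Saving a scatter plot matrix to a PNG file could look roughly like the sketch below. It assumes the non-interactive Agg backend is selected before pyplot is imported and that the image is written to the current directory. The backend choice, the helper name save_scatter_matrix, and the file name scatter1.png are assumptions of this example rather than requirements stated above.

```python
import matplotlib

matplotlib.use("Agg")  # Non-interactive backend that renders to files.

import matplotlib.pyplot as plt
import pandas as pd
from pandas.plotting import scatter_matrix


def save_scatter_matrix(df, png_path):
    """Draw a scatter plot matrix of df and save it as a PNG file."""
    axes = scatter_matrix(df, figsize=(6, 6))
    fig = axes[0, 0].figure  # All subplots share one figure.
    fig.savefig(png_path)
    plt.close(fig)  # Release the figure once it is on disk.
    return png_path


def azureml_main(dataframe1=None, dataframe2=None):
    # The file name is an arbitrary choice for this sketch.
    save_scatter_matrix(dataframe1, "scatter1.png")
    return (dataframe1,)
```

To return several figures, the same helper could be called once per figure with a different file name each time.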

Advanced examples

The Anaconda environment installed in Studio (classic) contains common packages such as NumPy, SciPy, and scikit-learn. These packages can be effectively used for data processing in a machine learning pipeline.

For example, the following experiment and script illustrate the use of ensemble learners in scikit-learn to compute feature importance scores for a dataset. The scores can be used to perform supervised feature selection before the data is fed into another model.

Here is the Python function used to compute the importance scores and order the features based on the scores:
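A minimal sketch of such a function, assuming an ExtraTreesClassifier as the ensemble learner and the label stored in the last column of the first input. The choice of estimator, the helper name rank_features, the output column names, and the synthetic demo data are all assumptions of this example.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import ExtraTreesClassifier


def rank_features(df, label_column):
    """Return feature names and importance scores, highest score first."""
    features = df.drop(columns=[label_column])
    labels = df[label_column]
    model = ExtraTreesClassifier(n_estimators=100, random_state=0)
    model.fit(features, labels)
    scores = pd.DataFrame(
        {"feature": features.columns, "importance": model.feature_importances_}
    )
    return scores.sort_values("importance", ascending=False).reset_index(drop=True)


def azureml_main(dataframe1=None, dataframe2=None):
    # Assumption: the label is the last column of the first input.
    return (rank_features(dataframe1, dataframe1.columns[-1]),)


if __name__ == "__main__":
    # Synthetic data: "signal" determines the label, "noise" does not.
    rng = np.random.default_rng(0)
    signal = rng.normal(size=200)
    demo = pd.DataFrame({
        "signal": signal,
        "noise": rng.normal(size=200),
        "label": (signal > 0).astype(int),
    })
    print(rank_features(demo, "label"))
```

Because the result is an ordinary DataFrame, it can be passed to the first output port and consumed by a downstream feature selection step.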

The following experiment then computes and returns the importance scores of features in the 'Pima Indian Diabetes' dataset in Azure Machine Learning Studio (classic):

Limitations

The Execute Python Script module currently has the following limitations:

Sandboxed execution

The Python runtime is currently sandboxed and doesn't allow access to the network or the local file system in a persistent manner. All files saved locally are isolated and deleted once the module finishes. The Python code cannot access most directories on the machine it runs on, the exception being the current directory and its subdirectories.

Lack of sophisticated development and debugging support

The Python module currently does not support IDE features such as IntelliSense and debugging. If the module fails at runtime, the full Python stack trace is available, but it must be viewed in the output log for the module. We currently recommend that you develop and debug Python scripts in an environment such as IPython and then import the code into the module.

Single data frame output

The Python entry point is only permitted to return a single data frame as output. It is not currently possible to return arbitrary Python objects such as trained models directly back to the Studio (classic) runtime. Like Execute R Script, which has the same limitation, it is possible in many cases to pickle objects into a byte array and then return that inside of a data frame.
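The pickling workaround mentioned above might be sketched like this. The example stores the pickled bytes as base64 text in a one-cell DataFrame, on the assumption that a text column passes through dataset conversion more predictably than raw bytes. The helper names, the column name pickled_object, and the dictionary standing in for a trained model are all hypothetical.

```python
import base64
import pickle

import pandas as pd


def object_to_frame(obj):
    """Pickle obj and store it as base64 text in a one-cell DataFrame."""
    payload = base64.b64encode(pickle.dumps(obj)).decode("ascii")
    return pd.DataFrame({"pickled_object": [payload]})


def frame_to_object(df):
    """Recover the object stored by object_to_frame."""
    payload = df["pickled_object"].iloc[0]
    return pickle.loads(base64.b64decode(payload))


def azureml_main(dataframe1=None, dataframe2=None):
    # Stand-in for a trained model; any picklable object works the same way.
    model = {"weights": [0.1, 0.2], "bias": 0.5}
    return (object_to_frame(model),)
```

A downstream script could call frame_to_object on the received frame to get the object back. Unpickling executes code from the payload, so this should only be done with data produced by your own experiment.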

Inability to customize Python installation

Currently, the only way to add custom Python modules is via the zip file mechanism described earlier. While this is feasible for small modules, it's cumbersome for large modules (especially modules with native DLLs) or a large number of modules.

Next steps

For more information, see the Python Developer Center.
